| Age | Commit message | Author | Lines |

|---|---|---|---|

|

|

LLVM currently adds a redundant check for the returned option, in addition

to the `self.ptr != self.end` check when using the default

`Iterator::fold` method that calls `vec::IntoIter::next` in a loop.
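To make the affected pattern concrete, here is a minimal sketch of a caller that consumes a `Vec` through `Iterator::fold`. The function name `sum_lengths` is hypothetical and not taken from the commit; whether the redundant check shows up in the generated code depends on the toolchain version.

```rust
/// Sums the lengths of all strings, consuming the vector.
/// `sum_lengths` is an illustrative name, not a standard library item.
pub fn sum_lengths(words: Vec<String>) -> usize {
    // `into_iter()` yields a `vec::IntoIter`; `fold` drives it to completion,
    // so an efficient `fold` benefits every caller of this shape.
    words.into_iter().fold(0, |acc, w| acc + w.len())
}
```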

|

|

`Iterator::try_fold` gets called on the underlying Iterator in

`SpecInPlaceCollect::collect_in_place` whenever it does not implement

`TrustedRandomAccess`. For types that impl `Drop`, LLVM currently can't

tell that the drop can never occur, when using the default

`Iterator::try_fold` implementation.

For example, the asm from the `unwrap_clone` method is currently:

```

unwrap_clone:

push rbp

push r15

push r14

push r13

push r12

push rbx

push rax

mov rbx, rdi

mov r12, qword ptr [rsi]

mov rdi, qword ptr [rsi + 8]

mov rax, qword ptr [rsi + 16]

movabs rsi, -6148914691236517205

mov r14, r12

test rax, rax

je .LBB0_10

lea rcx, [rax + 2*rax]

lea r14, [r12 + 8*rcx]

shl rax, 3

lea rax, [rax + 2*rax]

xor ecx, ecx

.LBB0_2:

cmp qword ptr [r12 + rcx], 0

je .LBB0_4

add rcx, 24

cmp rax, rcx

jne .LBB0_2

jmp .LBB0_10

.LBB0_4:

lea rdx, [rax - 24]

lea r14, [r12 + rcx]

cmp rdx, rcx

je .LBB0_10

mov qword ptr [rsp], rdi

sub rax, rcx

add rax, -24

mul rsi

mov r15, rdx

lea rbp, [r12 + rcx]

add rbp, 32

shr r15, 4

mov r13, qword ptr [rip + __rust_dealloc@GOTPCREL]

jmp .LBB0_6

.LBB0_8:

add rbp, 24

dec r15

je .LBB0_9

.LBB0_6:

mov rsi, qword ptr [rbp]

test rsi, rsi

je .LBB0_8

mov rdi, qword ptr [rbp - 8]

mov edx, 1

call r13

jmp .LBB0_8

.LBB0_9:

mov rdi, qword ptr [rsp]

movabs rsi, -6148914691236517205

.LBB0_10:

sub r14, r12

mov rax, r14

mul rsi

shr rdx, 4

mov qword ptr [rbx], r12

mov qword ptr [rbx + 8], rdi

mov qword ptr [rbx + 16], rdx

mov rax, rbx

add rsp, 8

pop rbx

pop r12

pop r13

pop r14

pop r15

pop rbp

ret

```

After this PR:

```

unwrap_clone:

mov rax, rdi

movups xmm0, xmmword ptr [rsi]

mov rcx, qword ptr [rsi + 16]

movups xmmword ptr [rdi], xmm0

mov qword ptr [rdi + 16], rcx

ret

```

Fixes #120493
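The Rust source of `unwrap_clone` is not reproduced in this message. As a rough sketch of the kind of function involved, assume a newtype whose payload owns heap memory (so dropping it is not a no-op) that is unwrapped through `map` and `collect`. The names `Wrapper` and `unwrap_all` are hypothetical.

```rust
/// A newtype around a heap-allocated value; dropping it frees memory.
/// Hypothetical type used only for illustration.
pub struct Wrapper(pub String);

/// Unwraps every element. Source and destination elements have the same
/// size and alignment, so `collect` is allowed to reuse the source
/// allocation instead of allocating a new buffer.
pub fn unwrap_all(v: Vec<Wrapper>) -> Vec<String> {
    v.into_iter().map(|w| w.0).collect()
}
```

Every element is moved out by the closure, so no `Wrapper` is left to drop once the iterator is exhausted; that is the fact the optimizer needs to see in order to remove the cleanup loop.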

|

|

LLVM does not know that the multiplication never overflows, which causes

it to generate unnecessary instructions. Use `usize::unchecked_mul`, so

that it can fold the `dst_cap` calculation when `size_of::<I::SRC>() ==

size_of::<T>()`.

Running:

```

rustc -C llvm-args=-x86-asm-syntax=intel -O src/lib.rs --emit asm

```

```rust

pub struct Foo([usize; 3]);

pub fn unwrap_copy(v: Vec<Foo>) -> Vec<[usize; 3]> {
    v.into_iter().map(|f| f.0).collect()
}

```

Before this commit:

```

define void @unwrap_copy(ptr noalias nocapture noundef writeonly sret([24 x i8]) align 8 dereferenceable(24) %_0, ptr noalias nocapture noundef readonly align 8 dereferenceable(24) %iter) {

start:

%me.sroa.0.0.copyload.i = load i64, ptr %iter, align 8

%me.sroa.4.0.self.sroa_idx.i = getelementptr inbounds i8, ptr %iter, i64 8

%me.sroa.4.0.copyload.i = load ptr, ptr %me.sroa.4.0.self.sroa_idx.i, align 8

%me.sroa.5.0.self.sroa_idx.i = getelementptr inbounds i8, ptr %iter, i64 16

%me.sroa.5.0.copyload.i = load i64, ptr %me.sroa.5.0.self.sroa_idx.i, align 8

%_19.i.idx = mul nsw i64 %me.sroa.5.0.copyload.i, 24

%0 = udiv i64 %_19.i.idx, 24

%_16.i.i = mul i64 %me.sroa.0.0.copyload.i, 24

%dst_cap.i.i = udiv i64 %_16.i.i, 24

store i64 %dst_cap.i.i, ptr %_0, align 8

%1 = getelementptr inbounds i8, ptr %_0, i64 8

store ptr %me.sroa.4.0.copyload.i, ptr %1, align 8

%2 = getelementptr inbounds i8, ptr %_0, i64 16

store i64 %0, ptr %2, align 8

ret void

}

```

After:

```

define void @unwrap_copy(ptr noalias nocapture noundef writeonly sret([24 x i8]) align 8 dereferenceable(24) %_0, ptr noalias nocapture noundef readonly align 8 dereferenceable(24) %iter) {

start:

%me.sroa.0.0.copyload.i = load i64, ptr %iter, align 8

%me.sroa.4.0.self.sroa_idx.i = getelementptr inbounds i8, ptr %iter, i64 8

%me.sroa.4.0.copyload.i = load ptr, ptr %me.sroa.4.0.self.sroa_idx.i, align 8

%me.sroa.5.0.self.sroa_idx.i = getelementptr inbounds i8, ptr %iter, i64 16

%me.sroa.5.0.copyload.i = load i64, ptr %me.sroa.5.0.self.sroa_idx.i, align 8

%_19.i.idx = mul nsw i64 %me.sroa.5.0.copyload.i, 24

%0 = udiv i64 %_19.i.idx, 24

store i64 %me.sroa.0.0.copyload.i, ptr %_0, align 8

%1 = getelementptr inbounds i8, ptr %_0, i64 8

store ptr %me.sroa.4.0.copyload.i, ptr %1, align 8

%2 = getelementptr inbounds i8, ptr %_0, i64 16

store i64 %0, ptr %2, align 8, !alias.scope !9, !noalias !14

ret void

}

```

Note that there is still one more `mul,udiv` pair that I couldn't get

rid of. The root cause is the same issue as #121239, the `nuw` gets

stripped off of `ptr::sub_ptr`.
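The capacity calculation this commit describes can be sketched as follows. This is not the library's code: `dst_capacity` is a hypothetical helper, and it assumes a toolchain where `usize::unchecked_mul` is available.

```rust
use std::mem::size_of;

/// Computes how many `Dst` values fit in a buffer that holds `src_cap`
/// values of type `Src`. Illustrative helper, not the library's code.
///
/// # Safety
/// `src_cap * size_of::<Src>()` must not overflow `usize`, which holds for
/// the capacity of any live allocation. `Dst` must not be zero-sized.
pub unsafe fn dst_capacity<Src, Dst>(src_cap: usize) -> usize {
    // SAFETY: the caller guarantees the product cannot overflow. Telling the
    // optimizer so lets it cancel the multiply against the divide below when
    // both element sizes are equal.
    let bytes = unsafe { src_cap.unchecked_mul(size_of::<Src>()) };
    bytes / size_of::<Dst>()
}
```

With a wrapping or checked multiply, the compiler has to assume the product may have overflowed, so `x * 24 / 24` cannot be simplified to `x`.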

|

|

This saves an extra load from memory.

|

|

Document overrides of `clone_from()` in core/std

As mentioned in https://github.com/rust-lang/rust/pull/96979#discussion_r1379502413

Specifically, when an override doesn't just forward to an inner type, document the behavior and that it's preferred over simply assigning a clone of source. Also, change instances where the second parameter is "other" to "source".

I reused some of the wording over and over for similar impls, but I'm not sure that the wording is actually *good*. Would appreciate feedback about that.

Also, now some of these seem to provide pretty specific guarantees about behavior (e.g. will reuse the exact same allocation iff the len is the same), but I was basing it off of the docs for [`Box::clone_from`](https://doc.rust-lang.org/1.75.0/std/boxed/struct.Box.html#method.clone_from-1) - I'm not sure if providing those strong guarantees is actually good or not.
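As a small illustration of why `clone_from` is preferred over assigning a clone, the sketch below refreshes one vector from another. `refresh` is a hypothetical helper name; the reuse of the existing buffer reflects how `Vec::clone_from` is documented to behave rather than a guarantee stated in this PR.

```rust
/// Overwrites `dst` with the contents of `src`, letting `Vec::clone_from`
/// reuse `dst`'s existing buffer where it can. Hypothetical helper.
pub fn refresh(dst: &mut Vec<String>, src: &Vec<String>) {
    // Compared with `*dst = src.clone()`, this avoids allocating a fresh
    // buffer when `dst` already has enough capacity.
    dst.clone_from(src);
}
```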

|

|

to capacity. Also make Vec::insert use reserve_for_push.

|

|

Implement `Vec::pop_if`

This PR adds `Vec::pop_if` to the public API, behind the `vec_pop_if` feature.

```rust

impl<T> Vec<T> {
    pub fn pop_if<F>(&mut self, f: F) -> Option<T>
    where F: FnOnce(&mut T) -> bool;
}

```

Tracking issue: #122741

## Open questions

- [ ] Should the first unit test be split up?

- [ ] I don't see any guidance on ordering of methods in impl blocks, should I move the method elsewhere?
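Because the method sits behind a feature gate in this PR, the sketch below models the intended behavior as a free function that builds on stable Rust. It is an assumption about the semantics based on the signature above, not the implementation from the PR.

```rust
/// Removes and returns the last element if `predicate` returns `true` for it.
/// A free-function sketch of the behavior; not the library implementation.
pub fn pop_if<T, F>(v: &mut Vec<T>, predicate: F) -> Option<T>
where
    F: FnOnce(&mut T) -> bool,
{
    // An empty vector has no last element, so there is nothing to test.
    let last = v.last_mut()?;
    if predicate(last) {
        v.pop()
    } else {
        None
    }
}
```

For example, `pop_if(&mut v, |x| *x % 2 == 0)` removes the last element only when it is even and leaves `v` untouched otherwise.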

|

|

select Vec::from_iter impls in a const block to optimize compile times

Ignoring whitespace diffs should make this easier to review.

This relies on the trick from #122301

Split out from #120682

|

|

Move the length check to before using `index` with `ptr::add` to prevent

an out of bounds pointer from being formed.

Fixes #122760
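The ordering matters because `ptr::add` requires its result to stay within the allocation (or one past its end), so the bounds check has to come first. The sketch below shows that ordering on a hypothetical helper, `get_copy`; it is not the function changed by this commit.

```rust
/// Returns a copy of the element at `index`, or `None` if out of bounds.
/// Hypothetical helper used only to show the order of operations.
pub fn get_copy<T: Copy>(slice: &[T], index: usize) -> Option<T> {
    // Check the index before doing any pointer arithmetic with it.
    if index >= slice.len() {
        return None;
    }
    // SAFETY: `index < slice.len()`, so the resulting pointer is in bounds
    // and valid for reads.
    Some(unsafe { *slice.as_ptr().add(index) })
}
```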

|

|

Vec::try_with_capacity

Related to #91913

Implements try_with_capacity for `Vec`, `VecDeque`, and `String`. I can follow it up with more collections if desired.

`Vec::try_with_capacity()` is functionally equivalent to the current stable:

```rust

let mut v = Vec::new();
v.try_reserve_exact(n)?;

```

However, `try_reserve` calls the non-inlined `finish_grow`, which requires both the old and the new `Layout` and is designed to reallocate memory. Besides the more convenient syntax, `try_with_capacity` has the benefit of generating much smaller code at the call site, with a direct call to the allocator. A codegen test is included.

It's also very desirable functionality for users of `no_global_oom_handling` (Rust-for-Linux), since it makes a very commonly used function available in that environment (`with_capacity` is used much more frequently than all of `(try_)reserve(_exact)`).
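For readers on a toolchain where `Vec::try_with_capacity` is not yet available, the stable equivalent mentioned above can be wrapped in a helper. The name `try_vec_with_capacity` is hypothetical.

```rust
use std::collections::TryReserveError;

/// Creates an empty vector with room for at least `n` elements, returning
/// an error instead of aborting if the allocation fails.
/// Stable-Rust sketch; `try_vec_with_capacity` is a hypothetical name.
pub fn try_vec_with_capacity<T>(n: usize) -> Result<Vec<T>, TryReserveError> {
    let mut v = Vec::new();
    // On an empty vector this reserves exactly what a fallible
    // `with_capacity` would.
    v.try_reserve_exact(n)?;
    Ok(v)
}
```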

|

|

Specifically, when an override doesn't just forward to an inner type,

document the behavior and that it's preferred over simply assigning

a clone of source. Also, change instances where the second parameter is

"other" to "source".

|

|

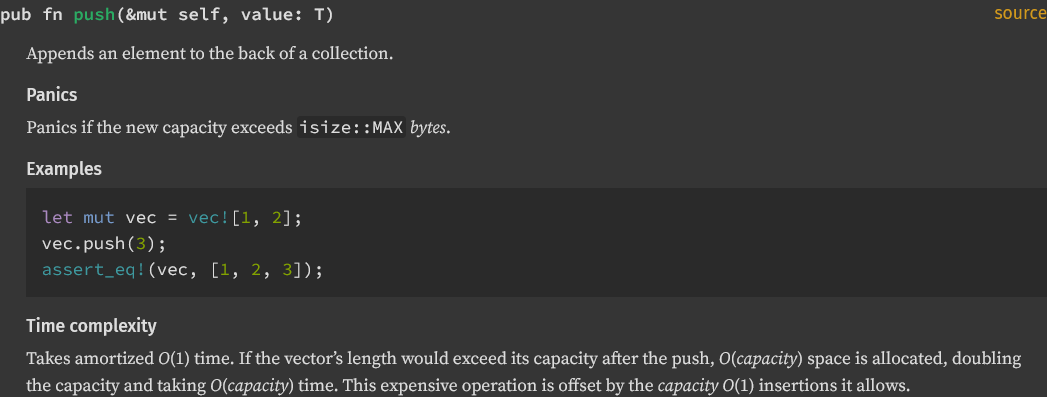

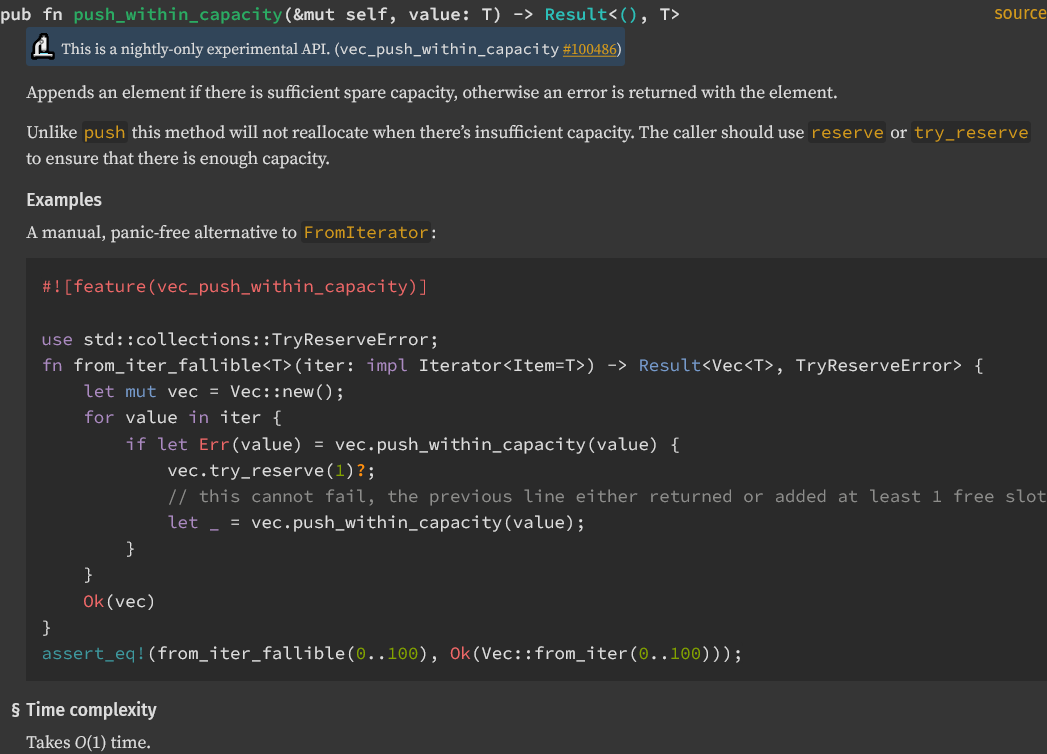

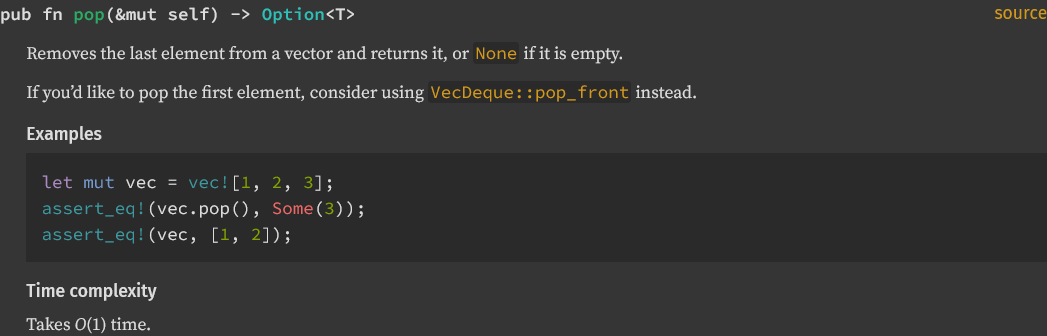

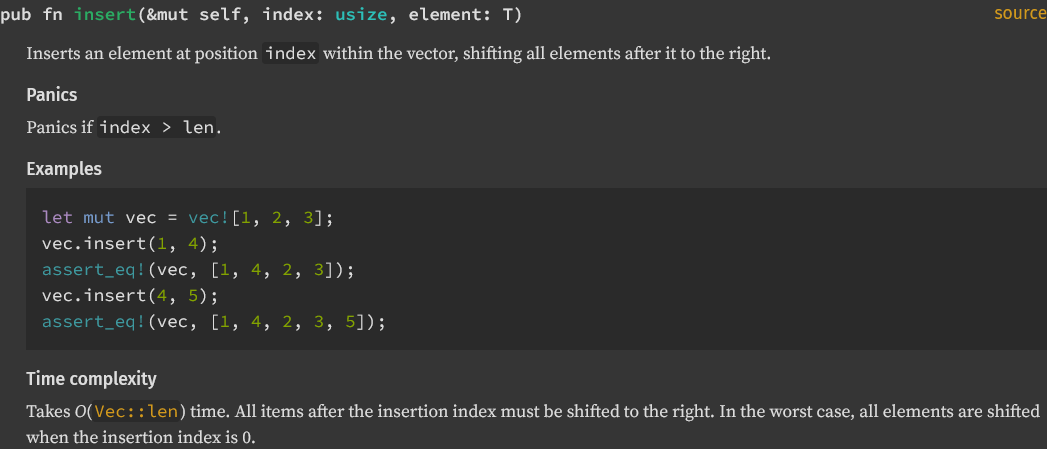

Add vector time complexity

Added time complexity for `Vec` methods `push`, `push_within_capacity`, `pop`, and `insert`.

<details>

<summary> Reference images </summary>

</details>

I followed a convention to use `#Time complexity` that I found in [the `BinaryHeap` documentation](https://doc.rust-lang.org/std/collections/struct.BinaryHeap.html#time-complexity-1). Looking through the rest of standard library collections, there is not a consistent way to handle this.

[`Vec::swap_remove`](https://doc.rust-lang.org/std/vec/struct.Vec.html#method.swap_remove) does not have a dedicated section for time complexity but does list it.

[`VecDeque::rotate_left`](https://doc.rust-lang.org/std/collections/struct.VecDeque.html#complexity) uses a `#complexity` heading.
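As a sketch of what the heading convention looks like in a doc comment, the example below documents a method on a hypothetical `Stack` wrapper. The wording is illustrative and is not the text added to `Vec` by this PR.

```rust
/// A thin stack wrapper used only to illustrate the doc layout.
pub struct Stack<T>(Vec<T>);

impl<T> Stack<T> {
    /// Creates an empty stack.
    pub fn new() -> Self {
        Stack(Vec::new())
    }

    /// Pushes `value` onto the top of the stack.
    ///
    /// # Time complexity
    ///
    /// Takes amortized *O*(1) time. If the underlying buffer is full, the
    /// elements are moved to a larger allocation, which takes *O*(*n*) time.
    pub fn push(&mut self, value: T) {
        self.0.push(value);
    }

    /// Returns the number of elements on the stack.
    pub fn len(&self) -> usize {
        self.0.len()
    }
}
```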

|

|

#91913

|

|

argument order

|

|

Use generic `NonZero` everywhere in `library`.

Tracking issue: https://github.com/rust-lang/rust/issues/120257

Use generic `NonZero` everywhere (except stable examples).

r? `@dtolnay`
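For context, the generic form reads `NonZero<u32>` where older code wrote `NonZeroU32`. The sketch below assumes a toolchain where the generic `NonZero` type is available; `div_ceil_nonzero` is a hypothetical function.

```rust
use std::num::NonZero;

/// Divides `total` into `parts` pieces, rounding up. The type of `parts`
/// rules out division by zero at compile time.
pub fn div_ceil_nonzero(total: u32, parts: NonZero<u32>) -> u32 {
    total.div_ceil(parts.get())
}
```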

|

|

Help with common API confusion, like asking for `push` when the data structure really has `append`.

```

error[E0599]: no method named `size` found for struct `Vec<{integer}>` in the current scope

--> $DIR/rustc_confusables_std_cases.rs:17:7

|

LL | x.size();

| ^^^^

|

help: you might have meant to use `len`

|

LL | x.len();

| ~~~

help: there is a method with a similar name

|

LL | x.resize();

| ~~~~~~

```

#59450

|

|

Copying is O(n)—not the memory allocation

|

|

Bump bootstrap compiler to just-built 1.77 beta

https://forge.rust-lang.org/release/process.html#master-bootstrap-update-t-2-day-tuesday

|

|

Co-authored-by: Josh Stone <cuviper@gmail.com>

|

|

impl `From<&[T; N]>` for `Cow<[T]>`

Implement `From<&[T; N]>` for `Cow<[T]>` to simplify its usage in the following example.

```rust

use std::borrow::Cow;

fn foo(data: impl Into<Cow<'static, [&'static str]>>) { /* ... */ }

fn main() {
    foo(vec!["hello", "world"]);
    foo(&["hello", "world"]); // Error: the trait `From<&[&str; 2]>` is not implemented for `Cow<'static, [&'static str]>`
    foo(&["hello", "world"] as &[_]); // Explicit conversion into a slice is required
}

```

|

|

Remove special-case handling of `vec.split_off(0)`

#76682 added special handling to `Vec::split_off` for the case where `at == 0`. Instead of copying the vector's contents into a freshly-allocated vector and returning it, the special-case code steals the old vector's allocation, and replaces it with a new (empty) buffer with the same capacity.

That eliminates the need to copy the existing elements, but comes at a surprising cost, as seen in #119913. The returned vector's capacity is no longer determined by the size of its contents (as would be expected for a freshly-allocated vector), and instead uses the full capacity of the old vector.

In cases where the capacity is large but the size is small, that results in a much larger capacity than would be expected from reading the documentation of `split_off`. This is especially bad when `split_off` is called in a loop (to recycle a buffer), and the returned vectors have a wide variety of lengths.

I believe it's better to remove the special-case code, and treat `at == 0` just like any other value:

- The current documentation states that `split_off` returns a “newly allocated vector”, which is not actually true in the current implementation when `at == 0`.

- If the value of `at` could be non-zero at runtime, then the caller has already agreed to the cost of a full memcpy of the taken elements in the general case. Avoiding that copy would be nice if it were close to free, but the different handling of capacity means that it is not.

- If the caller specifically wants to avoid copying in the case where `at == 0`, they can easily implement that behaviour themselves using `mem::replace`.

Fixes #119913.
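A caller who wants the old "steal the allocation" behavior can write it explicitly with `mem::replace`, as the last point above suggests. The helper below is a minimal sketch; the name `take_keep_capacity` is hypothetical.

```rust
use std::mem;

/// Takes all elements out of `buf` without copying them, leaving behind an
/// empty vector with at least the same capacity. Hypothetical helper.
pub fn take_keep_capacity<T>(buf: &mut Vec<T>) -> Vec<T> {
    let cap = buf.capacity();
    // The returned vector owns the old allocation; `buf` gets a new one.
    mem::replace(buf, Vec::with_capacity(cap))
}
```

Writing this out makes the capacity trade-off visible at the call site instead of hiding it inside `split_off`.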

|

|

Use `assert_unchecked` instead of `assume` intrinsic in the standard library

Now that a public wrapper for the `assume` intrinsic exists, we can use it in the standard library.

CC #119131
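As a sketch of how the wrapper is used, the example below states a precondition with `std::hint::assert_unchecked`. It assumes a toolchain where that function is available; `first_unchecked` is a hypothetical name and not code from this PR.

```rust
use std::hint::assert_unchecked;

/// Returns the first element of a slice the caller promises is non-empty.
///
/// # Safety
/// `values` must not be empty.
pub unsafe fn first_unchecked(values: &[u32]) -> u32 {
    // SAFETY: the caller guarantees that the slice is non-empty.
    unsafe { assert_unchecked(!values.is_empty()) };
    // With the assumption in place, the optimizer may remove the bounds
    // check on this index.
    values[0]
}
```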

|

|

Document some alternatives to `Vec::split_off`

One of the discussion points that came up in #119917 is that some people use `Vec::split_off` in cases where they probably shouldn't, because the alternatives (like `mem::take`) are hard to discover.

This PR adds some suggestions to the documentation of `split_off` that should point people towards alternatives that might be more appropriate for their use-case.

I've deliberately tried to keep these changes as simple and uncontroversial as possible, so that they don't depend on how the team decides to handle the concerns raised in #119917. That's why I haven't touched the existing documentation for `split_off`, and haven't added links to `split_off` to the documentation of other methods.
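Two alternatives of the kind discussed here can be sketched as follows. `mem::take` is named above; `drain` is my own choice of a second example and may not match the exact list in the PR. Both helper names are hypothetical.

```rust
use std::mem;

/// Moves every element out of `v`, leaving an empty, unallocated vector.
pub fn take_all<T>(v: &mut Vec<T>) -> Vec<T> {
    mem::take(v)
}

/// Hands each element from index `at` onward to `f`, without building an
/// intermediate vector for the tail.
pub fn for_each_tail<T>(v: &mut Vec<T>, at: usize, mut f: impl FnMut(T)) {
    for item in v.drain(at..) {
        f(item);
    }
}
```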

|

|

fix: Drop guard was deallocating with the incorrect size

`InPlaceDstBufDrop` holds onto the allocation before the shrinking happens, which means it must drop the destination elements but deallocate the source allocation.

Thanks `@cuviper` for spotting this.

|

|

Remove alignment-changing in-place collect

This removes the alignment-changing in-place collect optimization introduced in #110353

Currently, stable users can't benefit from the optimization because `GlobalAlloc` doesn't support alignment-changing realloc, and neither do most POSIX allocators. So in practice it has a negative impact on performance.

Explanation from https://github.com/rust-lang/rust/issues/120091#issuecomment-1899071681:

> > You mention that in case of alignment mismatch -- when the new alignment is less than the old -- the implementation calls `mremap`.

>

> I was trying to note that this isn't really the case in practice, due to the semantics of Rust's allocator APIs. The only use of the allocator within the `in_place_collect` implementation itself is [a call to `Allocator::shrink()`](https://github.com/rust-lang/rust/blob/db7125f008cfd72e8951c9a863178956e2cbacc3/library/alloc/src/vec/in_place_collect.rs#L299-L303), which per its documentation [allows decreasing the required alignment](https://doc.rust-lang.org/1.75.0/core/alloc/trait.Allocator.html). However, in stable Rust, the only available `Allocator` is [`Global`](https://doc.rust-lang.org/1.75.0/alloc/alloc/struct.Global.html), which delegates to the registered `GlobalAlloc`. Since `GlobalAlloc::realloc()` [cannot change the required alignment](https://doc.rust-lang.org/1.75.0/core/alloc/trait.GlobalAlloc.html#method.realloc), the implementation of [`<Global as Allocator>::shrink()`](https://github.com/rust-lang/rust/blob/db7125f008cfd72e8951c9a863178956e2cbacc3/library/alloc/src/alloc.rs#L280-L321) must fall back to creating a brand-new allocation, `memcpy`ing the data into it, and freeing the old allocation, whenever the alignment doesn't remain exactly the same.

>

> Therefore, the underlying allocator, provided by libc or some other source, has no opportunity to internally `mremap()` the data when the alignment is changed, since it has no way of knowing that the allocation is the same.
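
The fallback path the quote describes can be sketched with the free functions in `std::alloc`. Their `realloc` takes the old layout and a new size but no new alignment, so a change of alignment has to go through allocate, copy, and free. The helper name `shrink_with_new_align` is hypothetical, and this is a simplified model rather than the code in `library/alloc`.

```rust
use std::alloc::{alloc, dealloc, Layout};
use std::ptr;

/// Moves a block into a smaller allocation with a different alignment.
///
/// # Safety
/// `old` must have been allocated by the global allocator with `old_layout`.
/// `new_layout.size()` must be non-zero and no larger than `old_layout.size()`.
pub unsafe fn shrink_with_new_align(
    old: *mut u8,
    old_layout: Layout,
    new_layout: Layout,
) -> *mut u8 {
    unsafe {
        // A fresh allocation is required because the alignment differs.
        let new = alloc(new_layout);
        if !new.is_null() {
            // Copy the bytes that survive the shrink, then free the old block.
            ptr::copy_nonoverlapping(old, new, new_layout.size());
            dealloc(old, old_layout);
        }
        new
    }
}
```

From the allocator's point of view these are two unrelated allocations, which is why it never gets the chance to remap the memory in place.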

|